Kubernetes isn’t even easy to pronounce, much less explain. So we recently set out to demystify Kubernetes in plain English, so that a wide audience can understand it. (We also noted that the pronunciation may vary a bit, and that’s OK.)

[ See our related article, How to explain Kubernetes in plain English. ]

Of course, helping your organization understand Kubernetes isn’t the same thing as helping everyone understand why Kubernetes – and orchestration tools in general – are necessary in the first place.

If you need to make the case for microservices, for example, you’re pitching an architectural approach to building and operating software, not a particular platform for doing so. With Kubernetes, you need to do both: Pitch orchestration as a means of effectively managing containers (and, increasingly, containerized microservices) and Kubernetes as the right platform for doing so.

Why orchestration is necessary

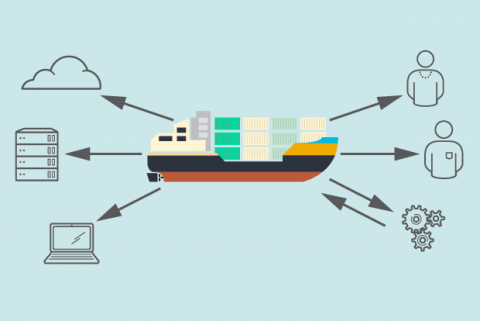

As we’ve noted before, it might be “easy” to deploy a container, but operating containers at scale is anything but. It’s quickly become consensus wisdom that an orchestration tool (sometimes called a container management tool or variations thereof) is a prerequisite for long-term success with containers.

The case for an orchestrator boils down to this: Using one enables greater automation, repeatability, and definability within your environment, while reducing a potentially crushing burden of manual work, especially as your container adoption grows.

“Without an orchestration framework of some sort, you’ve just got services running ‘somewhere’ – where you set them to run, manually – and if you lose a node or something crashes, it’s manual [work] to fix it,” says Sean Suchter, co-founder and CTO of Pepperdata. “With an orchestration framework, you declare how you want your environment to look, and the framework makes it look like that.”

Suchter adds that an orchestration platform is especially beneficial when running microservices in containers. By definition – the hint is in the prefix “micro-” – you tend to end up with a ton of containerized microservices over time. An orchestration tool like Kubernetes that is designed to deal with that operational complexity is an enormous asset.

Joe Beda, co-founder and CTO of Heptio, notes that orchestration unlocks one of the big-picture promises of microservices: Enabling small teams to solve big problems. Beda, one of Kubernetes’ original developers during his time at Google, gave a keynote in this vein at LinuxCon + ContainerCon North America 2016, called “The Operations Dividend.”

Here’s the elevator-pitch version, according to Beda: “Developer teams work best when they are small and the problem space that they are working on is bounded. This is one of the reasons why things move so fast at the start of a project (or company) but slow down over time,” he says. “Microservices is a way to think about scaling human teams to handle large problems by keeping each team small with well-defined interfaces.”

But a microservices architecture has associated costs, including the potential for considerable complexity as your environment changes and grows.

“The amount of effort to run a new thing in production is not zero. In fact, in many environments the time to set up a simple ‘hello world’ service is huge,” Beda says. “To responsibly deploy microservices, you must balance the cost and complexity of running a new service with the productivity benefit of enabling smaller teams.”

Enter cloud-native orchestration tools like Kubernetes.

“These tools allow operations teams, or those wearing the ops hat, to be more effective,” Beda explains. “Reducing the human cost of running services – and better tooling to have insight into what is going on – pays dividends in terms of allowing more services and hence smaller teams. When applied appropriately, this enables applications to be developed and evolved faster with higher quality.”

Use these factors to make the case for Kubernetes as a wise choice:

Kubernetes is the “Goldilocks” of orchestrators

Beda likens Kubernetes to Goldilocks: It’s just right.

“It does a good job of being higher-level than VMs but lower-level than more restrictive PaaS systems,” Beda says. “It is still a set of infrastructure building blocks, but without many of the annoying aspects of VMs. It is also very much toolable like other infrastructure services. But Kubernetes and containers [in general] are still flexible enough to meet the needs of a wide range of users.”

Kubernetes is flexible

Indeed, that flexibility is another huge draw. Beda compares Kubernetes to the Linux kernel, in that it can run in a very wide range of environments. Just as Linux runs on everything from phones to IoT devices to mainframes and more, Kubernetes is similarly flexible.

“Kubernetes can be used in many different environments. This might include as an implementation detail of a datacenter appliance, or it might be as a DevOps tool for a small company or department,” Beda says, offering examples of the use cases. “But it can also scale up to be the backbone for how an enterprise delivers IT across a wide range of groups.”

Kubernetes makes complexity manageable

Again, containers and containerized microservices carry a cost: the potential for increased – and continually increasing – complexity. Kubernetes is a significant weapon on that front.

“Kubernetes implements a real ideal of ‘hands-off’ management,” Suchter says. “It allows you to describe the desired running state of your production and it rapidly takes actions to make your desired state the reality.”
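For technical readers in the room, the “declare it, and the framework makes it so” idea can be illustrated with a toy reconciliation loop. This is a minimal sketch, not Kubernetes code or its API: the `Cluster` class, the `reconcile` function, and the service names `web` and `api` are all hypothetical, invented here purely for illustration. It assumes the desired state is simply a count of running copies per service.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Toy stand-in for a cluster (hypothetical): service name -> running copies."""
    running: dict = field(default_factory=dict)


def reconcile(desired: dict, cluster: Cluster) -> list:
    """Compare desired state with observed state and act on the difference.

    Returns a log of the corrective actions taken. A real orchestrator
    repeats this kind of comparison continuously; this sketch runs it once.
    """
    actions = []
    # Bring every declared service to its declared count.
    for name, want in desired.items():
        have = cluster.running.get(name, 0)
        if have < want:
            actions.append(f"start {want - have} x {name}")
        elif have > want:
            actions.append(f"stop {have - want} x {name}")
        cluster.running[name] = want
    # Stop anything that is running but was never declared.
    for name in list(cluster.running):
        if name not in desired:
            actions.append(f"stop {cluster.running[name]} x {name}")
            del cluster.running[name]
    return actions


# The operator declares what the environment should look like...
desired = {"web": 3, "api": 2}
cluster = Cluster(running={"web": 3, "api": 2})

# ...then a node is lost and two "web" copies disappear.
cluster.running["web"] = 1

# Nobody fixes it by hand: the loop notices the gap and closes it.
actions = reconcile(desired, cluster)
print(actions)
```

The point of the sketch is the division of labor: people edit `desired`, and the loop, not a person, works out what to start or stop.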

Kubernetes is supported by an active community

In addition to its flexibility, Suchter notes that Kubernetes is “extensible and has a very active community. These are the hallmarks of a winning open source infrastructure project.”

Beda describes the Kubernetes community simply: “Awesome.”

There are all manner of ways to back that assessment up. Dan Kohn, executive director of the Cloud Native Computing Foundation, which houses Kubernetes, notes in a blog post: “You can pick your preferred statistic. I prefer to just think of it as one of the fastest-moving projects in open source history.”

Here’s a recent one: Kubernetes was the most-discussed GitHub repository of the past year.

A pretty good beta tester

Kubernetes had a well-known beta tester: Google. If it handled the scope and scale of Google production systems, it can probably handle yours, too.

“Kubernetes came out of a lot of concepts that were first tried out in Google’s production [environments] and refined over many years,” Suchter says. “As such, it’s really well thought-out.”

And now, it’s backed by that highly engaged open source community and companies like Red Hat. Which brings us to...

Kubernetes has big-name supporters

Arvind Soni, VP of product at Netsil, points out something that should get the attention of CIOs and other IT leaders loath to get locked in with a single vendor or platform: Just about every major provider in the overlapping worlds of containers and cloud – public, private, and hybrid – supports Kubernetes. Score another point for short-term and long-term flexibility. That broad support may also make Kubernetes a no-brainer for IT shops managing multi-cloud environments.

Chances are, parts of your organization are already using multiple cloud providers. That’s because, as Red Hat technology evangelist Gordon Haff writes, IT’s thinking about data portability across clouds has evolved greatly.

The face of today’s hybrid cloud “really can be summed up as choice — choice to select the most appropriate types of infrastructure and services, and choice to move applications and data from one location to another when you want to,” Haff writes. That feeds the popularity of containers and Kubernetes for developing new applications.

[ Want to learn more about containers’ role in hybrid IT environments? Also read: Containers aren't just for applications, and The changing face of hybrid cloud, by Gordon Haff. ]