In the continuing IT balancing act between development and operations teams, new technologies are constantly emerging and proceeding through a gauntlet that runs between the two groups. The sides generally line up like this:

Developers

- This is new. I’m excited. Can I use this by myself?

- How can I develop a prototype?

- Can I automate this?

- Can I do self-service, including patches?

Operations

- This is new to me. Will I get the blame if it doesn’t work?

- How does this technology work – and is it proven?

- Do we have time to take this on and support it?

- How do you secure it against viruses?

With all the questions on either side, someone needs to bridge the divides between the two groups. The CTO can help by essentially working as a technical liaison to lay out the technical side to operations and the operations side to the development group.

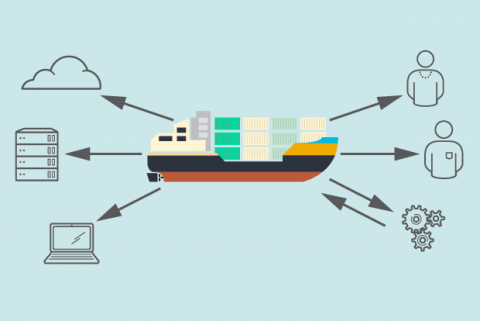

Enter containers: lightweight, nimble alternatives to virtual machines that are billed as easy to operate, easy to deploy and easy to manage. As a platform for distributed applications, they are a new way to slice up a machine, physical or virtual, into self-contained application environments (thus the name), all sharing the host's operating system kernel. A typical laptop can run 10 to 100 containers easily, and a server upwards of 1,000. Containers grew out of Linux technology some years back, and today they're often associated with the platform called Docker.

Our developers were excited about container technology, but operations worried that it was the next shiny object and that they would be left with the operational challenges. I see three significant benefits for everyone:

- Cost savings: In traditional development, you develop, test and then deploy. With containers, you can deploy the same container straight from the test environment into the operational environment, and the application will run the same way. This saves significant cost and time in development.

- Flexibility: Beyond being lightweight and nimble, containers perform, as we’ve measured them, almost as if they were running on bare metal. We’ve seen almost no performance degradation using containers, so they give us a lot of flexibility.

- Ease of operations and security: Because the code is packaged and run inside a container rather than tied to a particular machine, you can take the same code and operate it across many different environments. That’s a big plus. Security also appears to be strong, based on containers’ open source software construction.

Naturally, many of these benefits do not become real until the organization uses the technology, builds prototypes, and socializes it. That’s why our discussions were designed to get our operations people to test and understand container technologies. This was particularly important because containers are part of a much broader trend in IT – automating the infrastructure. Any new cloud startup, or any startup that works on the cloud, automates its infrastructure from the get-go. That’s not how an enterprise-built software stack and operations stack work, so initial resistance or skepticism is natural.

Fast-forward to 2015. We have been actively using container technology for about a year and are ready to implement it across the organization. And I’m pleased to report that both the development and operations groups are now on board and benefiting from it.

Tom Soderstrom serves as the Chief Technology and Innovation Officer in the Office of the CIO at NASA's Jet Propulsion Laboratory in Los Angeles, CA, and is a member of the Enterprisers Editorial Board. JPL is the lead U.S. center for robotic exploration of the solar system and conducts major programs in space-based Earth sciences. JPL currently has several dozen aircraft and instruments conducting active missions in and outside of our solar system.